Memory on the iPhone

Now here is the question: How much memory does the iPhone have? How much memory is OK for applications to use? Curious as I am, I did some research and wrote a little test project. This got me some odd results.

According to what you find on the net, the iPhone has a total memory size of 128 MB and your application should not use more than 46 MB of it. But what exactly happens when you try to obtain more?

So I wrote a little application that continuously allocates more and more memory. On every timer tick it frees the previous block and mallocs a larger one.

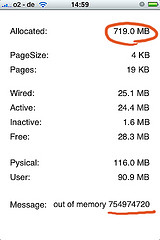

Now this is where it gets odd. While the sysctl() calls do report something around 128 MB of RAM, I can malloc() way beyond this. In fact, calling malloc(700000000) does not fail at all! When I run the application on the iPhone alone, it stops at around 719 MB. When I run it through Instruments, the whole device freezes at around 46 MB. This has been reproduced on 2.1 and 2.2 on different devices.

    - (void)tick
    {
        allocated = allocated + size;
        if (allocatedPtr) {
            free(allocatedPtr);
        }
        allocatedPtr = malloc(allocated);
        if (!allocatedPtr) {
            NSLog(@"out of memory at %ld", allocated);
            ...
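For reference, the physical RAM figure mentioned above can be read with a call along these lines. This is only a sketch, not the code from the test project: the function name `physical_memory_bytes` is made up for illustration, and the choice of the `HW_MEMSIZE` selector is an assumption (the post does not say which sysctl name was queried). The non-Apple branch is there only so the sketch also compiles off-device.

```c
#include <stddef.h>
#include <stdint.h>
#include <unistd.h>
#ifdef __APPLE__
#include <sys/types.h>
#include <sys/sysctl.h>
#endif

/* Hypothetical helper: returns installed physical RAM in bytes,
 * or 0 if the query fails. */
uint64_t physical_memory_bytes(void)
{
#ifdef __APPLE__
    /* Assumption: HW_MEMSIZE is the selector of interest (64-bit byte count). */
    int mib[2] = { CTL_HW, HW_MEMSIZE };
    uint64_t bytes = 0;
    size_t len = sizeof(bytes);
    if (sysctl(mib, 2, &bytes, &len, NULL, 0) != 0) {
        return 0;
    }
    return bytes;
#else
    /* Fallback for other Unix-like systems: page count times page size. */
    long pages = sysconf(_SC_PHYS_PAGES);
    long page_size = sysconf(_SC_PAGESIZE);
    if (pages <= 0 || page_size <= 0) {
        return 0;
    }
    return (uint64_t)pages * (uint64_t)page_size;
#endif
}
```

Whatever value this returns describes the hardware only. As the experiment shows, it puts no upper bound on what a single malloc() call will accept.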

Later I found someone who stumbled across the same thing.

Lazyweb: what is going on here?

You can download the test application here. But as a disclaimer: you run this code at your own risk!

Update:

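So after all, this means the result of malloc() has different semantics from what I expected: getting a pointer back apparently only means the address range was reserved, and the memory is not really claimed until it is written to. Adding the following piece of code, which writes to the whole block, makes the program behave and gives the expected result.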

    long *p = (long*)allocatedPtr;
    long count = allocated / sizeof(long);
    long i;
    for (i=0;i<count;i++) {
        *p++ = 0x12345678;
    }

So it turns out that if you allocate (and use!) around 46-50 MB in your iPhone application, it will just get terminated.
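The "allocate and use" idea can be wrapped into one small helper. The sketch below is a variation on the loop from the update, not the original code: instead of writing every word, it writes one byte per page, which should be enough to force the system to back each page with real memory. The name `alloc_and_touch` and the 4096-byte fallback page size are assumptions made for illustration.

```c
#include <stddef.h>
#include <stdlib.h>
#include <unistd.h>

/* Hypothetical helper: allocates `size` bytes and writes one byte per page,
 * so the block is actually used rather than merely reserved.
 * Returns NULL if malloc() itself fails. */
void *alloc_and_touch(size_t size)
{
    unsigned char *p = malloc(size);
    if (!p) {
        return NULL;
    }

    long page = sysconf(_SC_PAGESIZE);
    if (page <= 0) {
        page = 4096; /* assumed fallback if the page size is unavailable */
    }

    /* One write per page is enough to touch the whole block. */
    for (size_t off = 0; off < size; off += (size_t)page) {
        p[off] = 0x5A;
    }
    if (size > 0) {
        p[size - 1] = 0x5A; /* make sure the last page is touched too */
    }
    return p;
}
```

Swapping this in for the plain malloc() call in the tick method should make the limit show up at the point where memory is really consumed. On the device that means the process gets terminated, not that a NULL pointer comes back.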